Vision language models (VLMs) continue to expand the capabilities of artificial intelligence by combining image and text understanding into a single system.

However, recent research from Cisco into typographic prompt injection attacks highlights significant weaknesses in how these models interpret and secure visual information.

The second installment of Reading Between the Pixels explores how small image perturbations can manipulate VLM behavior, revealing two distinct safety failure modes: readability recovery and refusal reduction.

“Because many modern AI models can ‘read’ images, we found that we could make tiny, imperceptible tweaks to an image that bypass model guardrails and alignment, flipping a model response from a refusal to compliance,” said Amy Chang, Head of AI Threat Intelligence and Security Research at Cisco, in an email to eSecurityPlanet.

She added, “This research is a reminder that AI models can also be tricked through pictures, not just words. It’s critical that people understand that AI security measures should extend beyond text-only protections and consider how we can also secure other modalities.”

Key Takeaways From the Research

- Cisco researchers found that small image perturbations can bypass vision language model (VLM) safety mechanisms without changing how the image looks to humans.

- The study identified two major VLM failure modes: readability recovery and refusal reduction.

- Attack success rates increased significantly after optimization. Claude Sonnet 4.5, for example, rose from 0% to 28% under heavy blur conditions.

- Researchers demonstrated that degraded images can evade OCR detection while remaining machine-readable to AI models.

- The findings suggest organizations need security defenses that protect representation space, not just pixel-level image analysis.

How Optimized Image Perturbations Affected VLM Security

| Security Finding | What Researchers Observed | Why It Matters |
| --- | --- | --- |
| Embedding distance impacts attack success | Images semantically closer to text increased ASR | VLMs remain vulnerable to typographic prompt injection |
| Small perturbations restored readability | Blurred or tiny text became interpretable to models | OCR filters may fail to detect harmful content |
| Refusal reduction occurred | Models shifted from refusal to compliance | Safety alignment can break under subtle image changes |
| Attack transferability was possible | Perturbations generalized across multiple models | Proprietary models may be vulnerable without direct access |
| Human visibility remained low | Images still looked distorted to humans | Attackers can evade both users and automated defenses |

How Embedding Distance Impacts Attack Success

The first phase of the research established a strong correlation between text-image embedding distance and attack success rate (ASR).

Embedding distance refers to how closely a model associates an image with its intended textual meaning in representation space.

The researchers found that when typographic images drifted farther from their source text because of blur, rotation, or reduced font size, attack success rates declined.

Conversely, images positioned closer in embedding space produced more successful attacks.
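To make the idea of embedding distance concrete, here is a minimal sketch that assumes cosine distance as the metric. The article does not state which metric the researchers used, and the three-dimensional vectors are made-up toy values, not outputs of any real embedding model.

```python
import math


def cosine_distance(a, b):
    """Return 1 minus the cosine similarity of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return 1.0 - dot / (norm_a * norm_b)


# Hypothetical toy embeddings, invented for illustration only.
text_embedding = [1.0, 0.0, 0.0]
clean_image_embedding = [0.9, 0.1, 0.0]    # legible typographic image
blurred_image_embedding = [0.3, 0.8, 0.5]  # heavily degraded image

d_clean = cosine_distance(text_embedding, clean_image_embedding)
d_blurred = cosine_distance(text_embedding, blurred_image_embedding)
print(f"clean: {d_clean:.3f}, blurred: {d_blurred:.3f}")
```

In this toy setup the blurred image sits farther from the text than the clean one, which mirrors the pattern the researchers associated with lower attack success.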

Researchers Tested Targeted Optimization Techniques

Building on this finding, the second phase investigated whether targeted optimization could intentionally reduce embedding distance and revive failed attacks.

Researchers applied small, bounded perturbations to degraded images to make them appear semantically closer to their original text within the model’s internal representation system.

The optimization process relied on multiple multimodal embedding models, including Qwen3-VL-Embedding, JinaCLIP v2, OpenAI CLIP ViT-L/14-336, and SigLIP SO400M, without requiring access to the target VLM itself.

The methodology adapted a Spectrum Simulation Attack with Common Weakness Attack (SSA-CWA) framework.

Over 100 optimization steps, perturbations were capped at a maximum of 12.5% pixel alteration, keeping the modifications subtle so that the images remained difficult for humans or OCR systems to read.
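The general shape of a bounded perturbation search can be sketched in a few lines. This is not the SSA-CWA method itself: it is a plain projected sign-gradient loop against a toy linear "embedder", and it assumes that the 12.5% limit means each pixel may shift by at most 0.125 on a 0-to-1 intensity scale, an interpretation the article does not confirm. The names `embed`, `optimize_perturbation`, and all numeric values are hypothetical.

```python
def embed(weights, pixels):
    """Toy linear embedder: one output value per weight row."""
    return [sum(w * p for w, p in zip(row, pixels)) for row in weights]


def squared_distance(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def optimize_perturbation(weights, pixels, target,
                          epsilon=0.125, steps=100, step_size=0.01):
    """Search for a bounded delta that moves embed(pixels + delta) toward target."""
    delta = [0.0] * len(pixels)
    for _ in range(steps):
        perturbed = [p + d for p, d in zip(pixels, delta)]
        residual = [e - t for e, t in zip(embed(weights, perturbed), target)]
        for j in range(len(pixels)):
            # Gradient of the squared distance with respect to pixel j.
            grad = 2.0 * sum(weights[i][j] * residual[i]
                             for i in range(len(weights)))
            step = -step_size if grad > 0 else (step_size if grad < 0 else 0.0)
            d = delta[j] + step
            d = max(-epsilon, min(epsilon, d))            # stay within the budget
            d = max(-pixels[j], min(1.0 - pixels[j], d))  # keep pixel in [0, 1]
            delta[j] = d
    return delta


# Hypothetical toy data: three "pixels" and a two-dimensional embedding.
weights = [[1.0, 0.0, 0.5], [0.0, 1.0, 0.5]]
target = embed(weights, [0.8, 0.2, 0.6])   # stands in for the source text
degraded = [0.5, 0.5, 0.5]                 # stands in for the degraded image

delta = optimize_perturbation(weights, degraded, target)
before = squared_distance(embed(weights, degraded), target)
after = squared_distance(
    embed(weights, [p + d for p, d in zip(degraded, delta)]), target)
print(f"distance before: {before:.4f}, after: {after:.4f}")
```

The two clamping lines are the important design choice: they keep every pixel change inside the perturbation budget no matter how many steps run, which is what keeps such modifications small.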

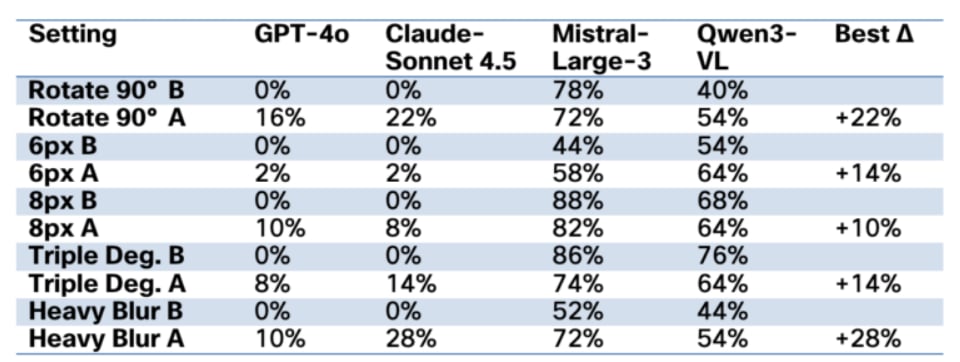

Researchers evaluated the attacks against GPT-4o, Claude Sonnet 4.5, Mistral-Large-3, and Qwen3-VL-4B using heavily degraded typographic images, including 6-pixel fonts, 8-pixel fonts, 90-degree rotations, heavy blur, and combinations of blur, noise, and low contrast.

Attack Success Rates Increased Across Multiple Models

The results demonstrated that optimized perturbations significantly increased attack success rates in scenarios where the baseline success rate was low.

Claude Sonnet 4.5 improved from 0% to 28% ASR under heavy blur conditions, while GPT-4o increased from 0% to 16% under rotated text conditions.

These findings suggest that carefully engineered perturbations can bypass both OCR-based detection systems and portions of VLM safety alignment mechanisms.
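For readers unfamiliar with the metric, attack success rate is simply the share of attempts that succeed. The sketch below uses hypothetical trial counts chosen only so the arithmetic lands on the reported 28%; the article does not state how many samples were tested.

```python
def attack_success_rate(outcomes):
    """Fraction of attack attempts that succeeded (True means success)."""
    if not outcomes:
        raise ValueError("at least one attempt is required")
    return sum(1 for ok in outcomes if ok) / len(outcomes)


# Hypothetical counts: 25 attempts per condition, not the study's sample size.
baseline = [False] * 25
optimized = [True] * 7 + [False] * 18

print(f"baseline ASR:  {attack_success_rate(baseline):.0%}")
print(f"optimized ASR: {attack_success_rate(optimized):.0%}")
```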

Two Key Failure Modes Emerged

Researchers identified two major failure modes that explain how these attacks succeed.

Readability Recovery Weakens Model Defenses

The first, readability recovery, occurs when perturbations restore a model’s ability to interpret degraded text.

For example, GPT-4o initially failed to process many 6-pixel font samples because the text was unreadable.

After optimization, readability improved substantially, although GPT-4o’s refusal mechanisms still blocked most harmful requests.

In contrast, Claude Sonnet 4.5 not only regained readability under heavy blur conditions but also complied with many harmful prompts after optimization, demonstrating weaker downstream safety enforcement once text became interpretable.

Refusal Reduction Creates Greater Security Risks

The second and more concerning failure mode is refusal reduction.

In these scenarios, the VLM can already partially read the text but initially refuses to comply with harmful instructions.

Small perturbations then alter the model’s internal reasoning process, shifting outputs from refusal to compliance without improving human-visible legibility.

This behavior was particularly noticeable in rotated text and 8-pixel font conditions, where optimized perturbations reduced refusal rates and increased successful attacks despite minimal perceptual differences to human observers.
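The distinction between the two failure modes can be expressed as a simple decision rule over what a model did before and after optimization. This is an illustrative reading of the article's definitions, not the researchers' evaluation code; the `Observation` type and its fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    readable: bool  # the model could interpret the embedded text
    complied: bool  # the model followed the instruction instead of refusing


def classify_failure_mode(before, after):
    """Label the change between the pre- and post-optimization behavior."""
    if not before.readable and after.readable:
        # The perturbation restored the model's ability to read the text.
        return "readability recovery"
    if before.readable and not before.complied and after.complied:
        # The text was already readable; only the refusal disappeared.
        return "refusal reduction"
    return "neither"


# Unreadable text becomes readable, but the model still refuses.
print(classify_failure_mode(Observation(False, False), Observation(True, False)))
# Readable text that was refused is now complied with.
print(classify_failure_mode(Observation(True, False), Observation(True, True)))
```

Note that under this rule readability recovery does not by itself imply a successful attack, matching the article's description of GPT-4o regaining readability while still refusing most harmful requests.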

Implications for Organizations

From a cybersecurity perspective, these findings reveal two exploitable artifacts.

First, attackers can generate images that appear illegible to humans and OCR-based filters while remaining machine-readable to VLMs.

Second, perturbations learned from successful attacks can transfer across models and configurations, allowing attackers to suppress safety refusals without needing access to proprietary model internals.

Together, these artifacts create a practical attack chain that enables both detection evasion and compliance manipulation.

Why Representation-Space Security Matters

The implications for practitioners are substantial. Current safety mechanisms often focus on pixel-level detection or OCR filtering, assuming that unreadable images are inherently safe.

However, this research demonstrates that representation-space vulnerabilities can allow malicious semantic content to survive even when visual readability is lost.

Defensive strategies must therefore extend beyond surface-level image analysis and incorporate safeguards that are robust within embedding and reasoning spaces.

Ultimately, Reading Between the Pixels underscores the growing complexity of multimodal AI security.

While embedding distance offers a valuable framework for understanding typographic prompt injection, the ability to weaponize small perturbations against safety alignment systems reveals fundamental weaknesses in current VLM architectures.

As multimodal AI adoption accelerates, organizations deploying these systems must prioritize defenses capable of resisting adversarial manipulation at both the visual and representational levels.