AI Agents, Denominator Problems, and the New Authority Control Plane: Why Identity Governance Has to Grow Up Fast, and the Guy Who Can Get You There

If you have been in cybersecurity long enough to remember when identity was a sleepy compliance checkbox, you are exactly the audience for what Puneet Bhatnagar is worried about right now.

Puneet describes himself as an identity security and cyber risk expert, someone who has spent almost two decades in the weeds of Identity Governance and Administration. In our conversation, you could hear both fatigue and excitement in his voice. Fatigue with the way enterprises still treat identity as an afterthought. Excitement because AI is finally forcing everyone to admit that the old model is cracking.

“Lot of the breaches that are occurring have some sort of a root or origin in mismanaged Identity Governance,” he says. That is the quiet part most boards prefer not to say out loud. We keep buying shiny threat intel feeds while our identity stack looks like a garage full of mismatched parts.

And now AI has rolled into that garage like a race car you are supposed to drive at 200 miles an hour on day one.

According to Puneet, if CISOs do not change how they think about identity, the combination of legacy access controls and autonomous AI agents is going to become the perfect storm.

AI for Security vs Security for AI

Puneet frames the current conversation through two lenses: security for AI and AI for security.

“There’s AI, there’s security for AI and there’s AI for security,” he says. “Security for AI is where a lot of the press and the conversations seem to be anchored around. There’s a lot of people talking about, hey, how do we secure AI? And that’s a super important question.”

If you went to RSAC or any major conference this year, you saw the security for AI story everywhere: model theft, prompt injection, data leakage. It is table stakes.

But Puneet argues the second lens is badly underdeveloped.

“You cannot fight like modern warfare with sticks and stones,” he says. “We need to leverage AI just as well to think about almost like evolved or new architecture that needs to then be created to start governing AI effectively and use cybersecurity to do that.”

In other words, AI is not just a new thing to protect. It is also the only realistic toolset we have to cope with the identity explosion it is causing.

Identity has been “the new perimeter” since 2012. How is that going?

If you feel like you have been hearing “identity is the new security perimeter” for a decade, you are not imagining it.

“When you go back to a lot of conversation has been around identity becoming the new security perimeter,” Puneet recalls. “When I was looking it up, 2012 is when that was first mentioned. Really 2012 is identity being the security perimeter.”

Twelve years later, most practitioners would struggle to say they actually have identity as their effective perimeter. The marketing slides got ahead of the implementation.

Despite all the buzzwords (zero trust, identity perimeter, continuous access evaluation), Puneet says enterprises still do not have true identity attack surface management.

“The first big gap I see is that we haven’t, despite all the buzz words around identity being the security perimeter – zero trust architecture and whatnot – we haven’t really gotten to the point as practitioners where we feel that the enterprises we represent are fully covered from an identity attack surface management perspective.”

Why? Because identity governance was boxed into the role auditors cared about, not the role attackers exploit.

“Traditionally, identity governance was seen more of as a compliance function. Hey, I need to pass my audits, get me clear on my SOX user access controls or HIPAA controls,” he says. “There was a certain scope that audits were limited to. It was maybe 15, 20 percent of your applications.”

The early IGA platforms built around that world did exactly what they were paid to do. They connected to a subset of key systems, made sure compliance reports came out the other end, and everyone chalked it up as a win.

From a threat perspective, that is a polite way of saying your denominator was always wrong.

The “denominator problem” and why coverage is the real control

Puneet calls this the denominator problem, and it is at the heart of his current thesis.

“If you think about SOC teams and security operations teams primarily relying on some form of control as a perimeter, you cannot rely on a control that doesn’t provide full coverage of your attack surface,” he explains.

He argues that identity teams have been governing a slice of the environment and pretending it is the whole. The result:

- Partial and incomplete attack surface coverage

- A false sense of comfort because audits “pass”

- A control fabric that attackers can route around

On top of that, the underlying technology landscape has never been friendly to broad, consistent identity governance.

“For single sign on, people rely on standards like SAML, OAuth and whatnot, but that’s identity verification,” he points out. “It’s not the access governance side. Access governance side, there are no standards. It’s the Wild Wild West. Someone calls it RBAC, someone calls it ABAC. And there is still an evolving area.”

For CISOs, that should sound familiar. Every major app has its own access model and permissions soup, and your IGA stack has to brute force its way into each one.

Puneet’s argument is that before you try to do anything sophisticated with AI, least privilege, or zero trust, you have to fix the denominator.

“We first need to think about what our denominator is, aka our attack surface, and start leveraging AI to build a complete coverage of that attack surface first,” he says. “It starts with that layer of deep, complete, rich identity observability.”
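To make the denominator idea concrete, here is a minimal Python sketch. It assumes a hypothetical inventory in which each application is simply flagged as governed or not; the application names, the `App` type, and the `governed` flag are illustrative assumptions, not data or tooling from the interview.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class App:
    name: str        # hypothetical application name
    governed: bool   # True if the IGA stack actually covers this app


def coverage(apps: list[App]) -> float:
    """Return the fraction of the full inventory that is governed."""
    if not apps:
        return 0.0
    return sum(a.governed for a in apps) / len(apps)


def ungoverned(apps: list[App]) -> list[str]:
    """Names of apps outside governance: the blind spots."""
    return [a.name for a in apps if not a.governed]


# Illustrative inventory: one audited app out of five.
inventory = [
    App("erp", True),
    App("crm", False),
    App("ci-pipeline", False),
    App("hr-portal", False),
    App("data-lake", False),
]

print(f"coverage: {coverage(inventory):.0%}")
print("blind spots:", ungoverned(inventory))
```

The arithmetic is trivial on purpose. The hard part in practice is building the inventory, because the ratio only means something if the denominator really is every application and not just the ones in audit scope.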

From governing access to governing decisions

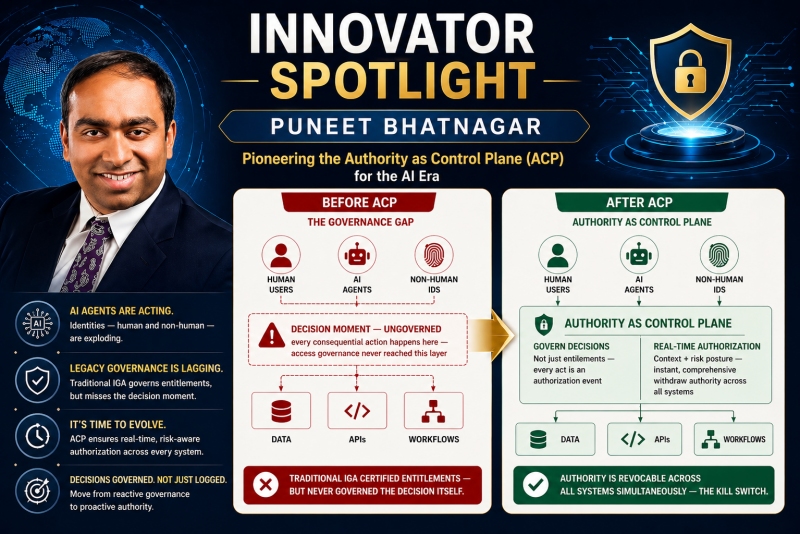

Here is where his thinking diverges from traditional IGA. Visibility and coverage are just part one.

Once you finally see your full identity attack surface, you run into an uncomfortable truth: all of your governance is aimed at what people are allowed to do, not what they actually do.

“All of this while what we have been really trying to govern is access, which is what people are allowed to do,” Puneet says. “It is not governing what people are actually doing. It’s not governing what people are actually using. In short, we are not governing decisions. We are governing access.”

That distinction used to be defensible because the decision making process was largely opaque and human. Managers certified access, people clicked through approvals, and only the outcomes were recorded.

“The reason we didn’t govern decisions before is because the decision sat in the human being’s head,” he notes. “The only thing that got recorded was the outcome. It was not the decision making process.”

AI is blowing that up. And not gently.

“Humans are delegating their authority,” he says. “We are [not] just asking AI agents to assist us. We are actually delegating work to them. So they are going across multiple apps and workflows and silos. They are making a number of decisions. They are going from writing code to approving code to approving production code changes to pushing changes in production all across, just like human beings.”

If you are a board member or CISO and that sentence does not make you slightly nauseous, read it again.

Boards are already reacting.

“Boards are now increasingly worried,” Puneet says. “They are asking CISOs and CIOs, all right, do you really know where exactly your AI is acting, and what is that AI kill switch?”

That is the punchline. You cannot have an AI kill switch if you do not even know:

- Where AI agents exist

- What decisions they are taking

- Under what authority and context they are acting

“When you take a step back and you say that, hey, I need AI kill switch, I need to know where all my AI is acting, the underlying control plane or control fabric that really ties everything together is decisions or the authority,” he argues. “Authority is what we now need to govern.”

This is where his concept of an “authority control plane” comes in. You move from merely provisioning access rights to continuously verifying that a given agent, human or machine, is authorized to take a specific decision in a specific context.

“At that point in time when decisions are being made, is that agent authorized to make a decision?” he asks. “Is that agent making the decision under the right circumstances? Are they approving the right amount of access? Should they be allowed to do this?”
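Those questions can be read as a check that runs before a decision executes, rather than a review that happens afterward. The sketch below is one way that might look in Python. Everything in it is an assumption made for illustration: the `Delegation` and `Decision` records, their fields, and the `authorize` function are hypothetical and do not describe any vendor's product or an approach Puneet specified.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    """Authority a human has delegated to an agent (hypothetical model)."""
    agent_id: str
    action: str          # e.g. "approve_access"
    environment: str     # context in which the authority holds
    max_amount: int      # upper bound on what may be approved
    revoked: bool = False


@dataclass(frozen=True)
class Decision:
    """A decision an agent is about to take, evaluated before it executes."""
    agent_id: str
    action: str
    environment: str
    amount: int


def authorize(decision: Decision, delegations: list[Delegation]) -> tuple[bool, str]:
    """Allow the decision only if some live delegation fully covers it."""
    reason = "no delegation for this agent and action"
    for d in delegations:
        if (d.agent_id, d.action) != (decision.agent_id, decision.action):
            continue
        if d.revoked:
            reason = "delegation revoked"
        elif d.environment != decision.environment:
            reason = "wrong context"
        elif decision.amount > d.max_amount:
            reason = "exceeds delegated limit"
        else:
            return True, "authorized"
    return False, reason


grants = [Delegation("agent-7", "approve_access", "staging", max_amount=5)]
print(authorize(Decision("agent-7", "approve_access", "staging", 3), grants))
print(authorize(Decision("agent-7", "approve_access", "production", 3), grants))
```

Note that the default answer is deny. An agent with no matching delegation is refused, and setting `revoked` on a delegation acts as a narrow kill switch for that one grant of authority.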

AI governance becomes a horizontal, not a silo

Puneet believes the next 6 to 12 months will be about redrawing lines inside enterprises.

“In what I mean by redrawing lines is like a lot of, because AI, it doesn’t sit in established verticals that we have already built around governance,” he says. “It goes horizontal.”

Instead of being one more tool under the CISO, AI governance will become a cross functional effort that drags multiple C level leaders to the same table.

“I think we will see some sort of AI governance narratives start to become more formalized in enterprises, which will require many tower heads or C level roles to work together,” Puneet predicts. “CISOs, divisional CTOs who care about developer experience, combined with some enterprise technology CTOs that are trying to deploy Copilot and other tools more securely, will need to come together and think through what does that horizontal AI Governance initiative look like?”

You can already see the market responding. Puneet points to a wave of well-funded startups trying to define this platform layer for AI governance, as well as incumbents racing to extend their offerings into this new territory.

“They are starting to think about creating not just a small wedge, but creating a platform level narrative around AI governance,” he explains. That includes “[inventorying] those agents, being able to discover the agents, being able to monitor those agents, advanced level of logging and monitoring, and then extending that into the identity fabric itself.”

Runtime authorization for agents acting at machine speed

One of the more practical challenges he highlights is time. Humans may debate a risky change for days. AI agents do not.

“AI agents are going to act and execute much faster,” Puneet says. “They are not going to take days or weeks to discuss and deliberate and take human decisions. They are going to take decisions in the moment, and they are going to execute at machine speed. So you need a runtime architecture to support that, and today, IGA tools are not runtime based. They are more after the fact.”

If your current governance model is built on quarterly access reviews and nightly batch jobs, that is not going to scale to a world where agents can grant, use, and abuse access in seconds.

That is where he sees opportunity for both existing players and new entrants: a runtime, decision centric control plane that can answer, in real time, whether a given agent is allowed to take a given action right now.

On the incumbent side, he calls out vendors that come from the IGA space and are now reaching into adjacent domains like privileged access management, identity threat detection and response, and accelerated application onboarding. On the startup side, he points to firms experimenting with using AI to “learn” application access models from observed behavior, rather than building brittle connectors one by one.

Some newcomers are using techniques as simple sounding as watching a short video of how an application is administered and using AI to infer its underlying access model and data structures. It is the kind of thing that would have sounded like science fiction to an IGA implementation team ten years ago.

The cost side: agents, budgets, and cloud deja vu

It would not be a modern AI conversation without talking about cost explosions. Puneet sees strong parallels to the early days of cloud adoption.

He warns that as enterprises spin up agents with minimal guardrails, they risk the AI equivalent of the “surprise seven figure cloud bill.”

AI governance, in his view, has to include not just security and identity, but also financial operations.

“How do we make sure that we do not deploy agents in a way that we burn our entire year budget in one month,” he asks, drawing a direct line to “similar problems to what started coming up when cloud was new, when AWS was coming up and Azure was coming out.”

The same immature governance that leads to toxic combinations of privileges can also lead to toxic combinations of cost drivers. Invisible agents taking unlimited actions inside opaque billing models is not a recipe any CISO or CIO should be comfortable with.
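As a rough illustration of a financial guardrail, here is a minimal Python sketch of a hard spending cap per agent. The `AgentBudget` class, the monthly cap, and the dollar figures are hypothetical, and a real deployment would draw costs from actual billing data rather than from numbers passed in by the caller.

```python
class BudgetExceeded(Exception):
    """Raised when an agent action would exceed its spending cap."""


class AgentBudget:
    """Tracks spend per agent against a hard monthly cap (hypothetical guardrail)."""

    def __init__(self, monthly_cap_usd: float) -> None:
        self.monthly_cap_usd = monthly_cap_usd
        self.spent_usd: dict[str, float] = {}

    def charge(self, agent_id: str, cost_usd: float) -> float:
        """Record a cost, refusing it if the agent would exceed its cap.

        Returns the agent's remaining budget after the charge.
        """
        spent = self.spent_usd.get(agent_id, 0.0)
        if spent + cost_usd > self.monthly_cap_usd:
            raise BudgetExceeded(f"{agent_id} would exceed {self.monthly_cap_usd} USD")
        self.spent_usd[agent_id] = spent + cost_usd
        return self.monthly_cap_usd - self.spent_usd[agent_id]


budget = AgentBudget(monthly_cap_usd=100.0)
budget.charge("agent-7", 60.0)       # allowed, within the cap
try:
    budget.charge("agent-7", 50.0)   # would bring the total to 110, over the cap
except BudgetExceeded as exc:
    print("blocked:", exc)
```

The check happens before the spend is recorded, so a refused action leaves the running total unchanged. That is the same check-before-acting posture the article argues for on the authorization side.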

What CISOs should actually do next

For CISOs and senior security leaders reading this, Puneet’s message is not to throw out your IGA stack and buy the first shiny AI governance platform you see on the show floor.

His narrative is more uncomfortable than that: your current identity controls were never designed for this environment, and it is time to be honest about that.

He argues you need to:

- Fix the denominator

Get clear on your real identity attack surface, not the 20 percent of systems that happen to be convenient for audit. Use AI where it helps to gain “deep, complete, rich identity observability” across humans, machine identities, and emerging AI agents.

- Move from access centric to decision centric governance

Start treating decisions and delegated authority as first class citizens. Ask, for every high impact workflow, “Who or what is actually making the decision, under what context, and how do we prove they were authorized to do so at that moment?”

You will not find an off the shelf “authority control plane” that instantly solves this. But you can start moving your architecture in that direction, and you can start demanding that vendors talk in those terms rather than just rebranding yesterday’s permission model as “AI ready.”

A call to action for CISOs

If you are a CISO or senior security leader, here is the uncomfortable reality: AI agents are already in your environment, whether you approved them or not. Developers, business users, and vendors are delegating real authority to non human actors that can operate at machine speed across critical systems.

You do not get to opt out of governing that.

The next step is not another round of abstract strategy decks. It is concrete action:

- Map your identity attack surface and explicitly call out the gaps your current IGA and PAM tools do not see.

- Identify where AI agents, copilots, and automation are already taking decisions in your environment, not just assisting humans.

- Ask your current identity and security vendors how they will help you move toward runtime, decision centric governance, not just more after the fact reports.

- Pilot emerging solutions that can accelerate coverage of your “denominator” and give you real observability into where authority is being exercised.

Most importantly, drive a cross functional AI governance initiative that includes security, application owners, developer experience leaders, and finance. AI governance is horizontal. If it lives only under the CISO, it will fail.

Puneet’s core warning is simple: we cannot keep governing yesterday’s access lists while tomorrow’s agents are making today’s decisions. Identity as the new perimeter was a nice slogan in 2012. In the age of autonomous workers, it has to become an actual architecture.

For more information, contact Puneet Bhatnagar directly at [email protected].

About the Author

Pete Green is the CISO / CTO of Anvil Works, a ProCloud SaaS company, and co-author of “The vCISO Playbook: How Virtual CISOs Deliver Enterprise-Grade Cybersecurity to Small and Medium Businesses (SMBs)”. With over 25 years of experience in information technology and cybersecurity, Pete is a seasoned and accomplished security practitioner.

Throughout his career, he has held a wide range of technical and leadership roles, including LAN/WLAN Engineer, Threat Analyst, Security Project Manager, Security Architect, Cloud Security Architect, Principal Security Consultant, Director of IT, CTO, CEO, Virtual CISO, and CISO.

Pete has supported clients across numerous industries, including federal, state, and local government, as well as financial services, healthcare, food services, manufacturing, technology, transportation, and hospitality.

He holds a Master of Computer Information Systems in Information Security from Boston University, which is recognized as a National Center of Academic Excellence in Information Assurance / Cyber Defense (CAE IA/CD) by the NSA and DHS. He also holds a Master of Business Administration in Informatics.