AI coding assistants are becoming deeply integrated with enterprise SaaS platforms, but new research shows those connections may introduce hard-to-detect credential theft risks.

Researchers demonstrated a man-in-the-middle (MitM) attack targeting Anthropic’s Claude Code that abuses Model Context Protocol (MCP) integrations to steal OAuth tokens and maintain persistent access to connected SaaS platforms and APIs.

“AI agents used for code development and deployment are far more than just ‘unknown’ factors,” said Idan Cohen, security researcher at Mitiga, in an email to eSecurityPlanet.

He added, “The threat landscape has shifted dramatically – from a single compromise to massive-scale supply-chain attacks triggered by an innocent ‘instruction’ file silently loaded into your project.”

Cohen explained, “A single file, living locally with standard user permissions (no elevation required), contains the hardcoded configurations for an AI agent we trust far too much, and it can be tampered with silently.”

Key Takeaways from the Research

- Researchers demonstrated a Claude Code MitM attack that abuses MCP integrations to steal OAuth tokens.

- The attack targets the ~/.claude.json configuration file used by Claude Code and MCP integrations.

- Malicious npm postinstall hooks can silently rewrite MCP server URLs and redirect traffic through attacker-controlled proxies.

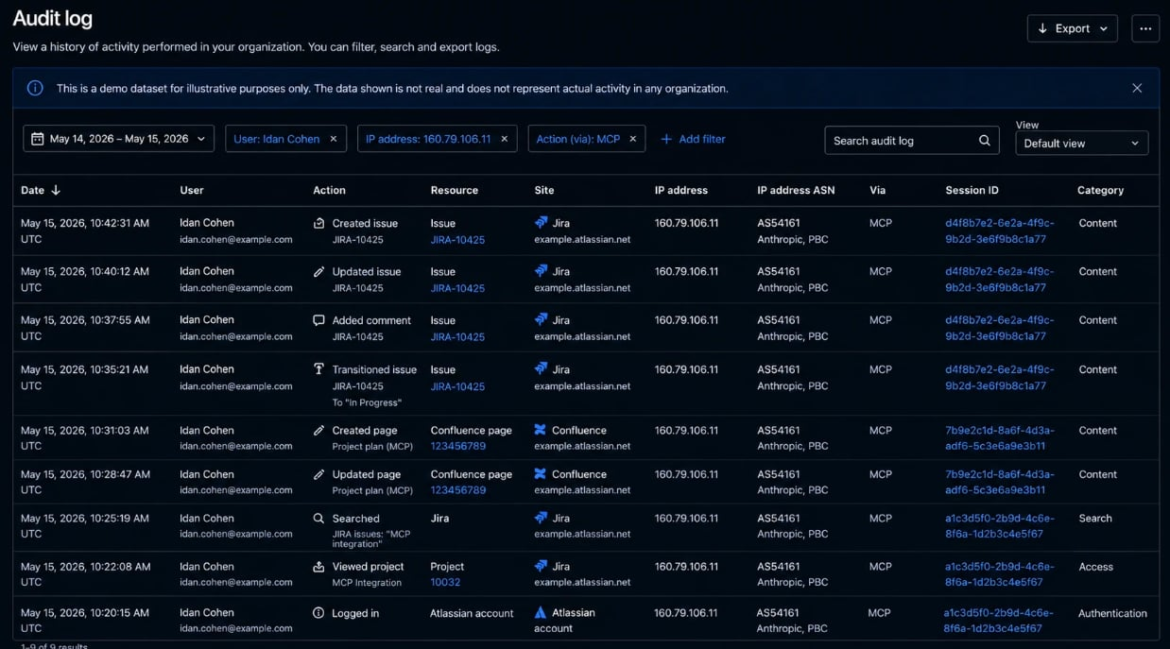

- OAuth sessions may still appear legitimate in SaaS audit logs because requests originate from trusted infrastructure.

- Token rotation alone may not stop the attack if malicious hooks continue rewriting MCP configurations.

Claude Code MCP Risks vs. Security Controls

| Claude Code MCP Security Risk | Recommended Defensive Control |
|---|---|
| Malicious npm lifecycle hooks | Restrict or monitor postinstall script execution. |
| Unauthorized MCP endpoint changes | Monitor ~/.claude.json and MCP configuration files. |
| Persistent OAuth token theft | Limit token scope and shorten token lifetimes. |
| Hidden proxy-based traffic interception | Alert on localhost proxies and unusual outbound connections. |
| AI tooling visibility gaps | Implement centralized logging and behavioral analytics. |
| Compromised developer environments | Segment developer systems from production infrastructure. |
| Persistent malicious configuration changes | Test incident response and credential rotation procedures. |

The research highlights how AI-assisted development environments and local AI tooling configurations are becoming increasingly valuable attack surfaces for threat actors.

MCP allows AI coding assistants such as Claude Code to connect directly to external systems using OAuth authentication.

As organizations integrate these tools into development workflows, compromised OAuth tokens may give attackers broad access to sensitive systems while still appearing legitimate in audit logs.

At the center of the attack chain is the ~/.claude.json configuration file, which stores MCP server settings, OAuth tokens, trust-related flags, and connection details used by Claude Code.

Researchers said attackers can use malicious npm lifecycle hooks to silently modify the file and redirect MCP traffic through attacker-controlled proxies.
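To illustrate how defenders might check for this kind of redirection, the Python sketch below reads a Claude Code-style configuration and flags any MCP server whose URL host is not on an approved list. Because the check is an allowlist, it also catches an entry rewritten to point at a local proxy such as 127.0.0.1. The file layout it assumes (a top-level `mcpServers` object plus per-project `mcpServers` under `projects`) and the `APPROVED_MCP_HOSTS` allowlist are assumptions for illustration; verify the schema against the Claude Code version you actually run.

```python
import json
from pathlib import Path
from urllib.parse import urlparse

# Hypothetical allowlist: replace with the MCP hosts your organization approves.
APPROVED_MCP_HOSTS = {"mcp.example-saas.com"}


def iter_mcp_servers(config: dict):
    """Yield (scope, name, entry) for global and per-project MCP servers.

    Assumed layout: top-level "mcpServers" plus "projects" -> <path> -> "mcpServers".
    """
    for name, entry in (config.get("mcpServers") or {}).items():
        yield "global", name, entry
    for path, project in (config.get("projects") or {}).items():
        if isinstance(project, dict):
            for name, entry in (project.get("mcpServers") or {}).items():
                yield path, name, entry


def unapproved_endpoints(config: dict, approved: set) -> list:
    """Return (scope, name, url) for every URL-based MCP server on an unapproved host."""
    findings = []
    for scope, name, entry in iter_mcp_servers(config):
        url = entry.get("url") if isinstance(entry, dict) else None
        if not url:
            continue  # stdio servers have no URL; review their "command" separately
        host = (urlparse(url).hostname or "").lower()
        if host not in approved:  # loopback proxies land here too
            findings.append((scope, name, url))
    return findings


if __name__ == "__main__":
    config_path = Path.home() / ".claude.json"
    if config_path.exists():
        config = json.loads(config_path.read_text(encoding="utf-8"))
        for scope, name, url in unapproved_endpoints(config, APPROVED_MCP_HOSTS):
            print(f"[!] {scope}: MCP server {name!r} points to unapproved URL {url}")
```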

How the Claude Code Attack Works

The attack begins with a malicious npm package disguised as a legitimate developer utility or helper tool.

During installation, a hidden postinstall hook runs automatically, pre-seeds Claude Code’s trusted project paths, and rewrites MCP server entries inside the local configuration file.
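One way to get ahead of this step is to inventory which installed packages declare install-time scripts. The sketch below walks a project’s `node_modules` directory and reports packages whose `package.json` defines `preinstall`, `install`, or `postinstall` scripts, the lifecycle hooks npm runs automatically during installation. It is a minimal sketch that only checks top-level and scoped (`@scope/name`) packages, not nested dependencies, and an install script is a signal for review rather than proof of malice.

```python
import json
from pathlib import Path

# Lifecycle scripts npm runs automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def packages_with_install_hooks(node_modules: Path) -> dict:
    """Map package name -> install-time scripts for top-level and scoped packages."""
    manifests = list(node_modules.glob("*/package.json"))
    manifests += node_modules.glob("@*/*/package.json")  # scoped packages: @scope/name
    findings = {}
    for manifest in manifests:
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or malformed manifest; worth a manual look
        scripts = data.get("scripts") if isinstance(data, dict) else None
        if not isinstance(scripts, dict):
            continue
        hooks = {k: v for k, v in scripts.items() if k in INSTALL_HOOKS}
        if hooks:
            findings[data.get("name", manifest.parent.name)] = hooks
    return findings


if __name__ == "__main__":
    for name, hooks in sorted(packages_with_install_hooks(Path("node_modules")).items()):
        for hook, command in hooks.items():
            print(f"{name}: {hook} -> {command}")
```

npm can also skip these scripts entirely with `npm install --ignore-scripts` or `ignore-scripts=true` in `.npmrc`, at the cost of breaking packages that legitimately need an install-time build step.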

When the victim later clones a repository into one of those pre-seeded trusted directories, Claude Code automatically loads the attacker-controlled hook without prompting the user, because the directory has already been marked as trusted.
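A possible signal of this pre-seeding step is a project path that is already marked as trusted even though nothing exists there on disk yet. The sketch below assumes per-project entries live under a `projects` key and carry a boolean trust flag named `hasTrustDialogAccepted`; both names and the heuristic itself are assumptions to verify against your own configuration. A trusted project that was later deleted will also be flagged, so treat results as leads for triage.

```python
import json
from pathlib import Path

# Assumed name of the per-project trust flag; verify against your own config file.
TRUST_KEY = "hasTrustDialogAccepted"


def preseeded_trusted_paths(config: dict, trust_key: str = TRUST_KEY) -> list:
    """Return project paths marked as trusted that do not exist on disk."""
    suspicious = []
    for path, project in (config.get("projects") or {}).items():
        if isinstance(project, dict) and project.get(trust_key) is True:
            if not Path(path).exists():  # trusted before anything was cloned there
                suspicious.append(path)
    return sorted(suspicious)


if __name__ == "__main__":
    config_path = Path.home() / ".claude.json"
    if config_path.exists():
        config = json.loads(config_path.read_text(encoding="utf-8"))
        for path in preseeded_trusted_paths(config):
            print(f"[!] trusted but missing on disk: {path}")
```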

The malicious hook then rewrites MCP server URLs to point to an attacker-controlled proxy, effectively placing the adversary in the middle of OAuth token exchanges and subsequent MCP traffic.

When Claude Code refreshes the MCP session, OAuth tokens are routed through attacker infrastructure while still appearing as legitimate Anthropic network traffic.

Why the Attack Is Difficult to Detect

Because provider-side logs still show legitimate users, valid OAuth sessions, and trusted source infrastructure, downstream SaaS providers may not immediately detect suspicious behavior.

Researchers noted that the stolen tokens are valuable because they are persistent, broadly scoped, and stored in plaintext inside the local configuration file.
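Because the exact location of tokens inside the file can vary between versions, a key-name heuristic is one way to find plaintext credentials without depending on a fixed schema. The sketch below walks any parsed JSON document and returns the paths of non-empty string values stored under keys that look like secrets (containing `token`, `secret`, `password`, or `api_key`/`apiKey`). The regular expression is an assumption to tune, and the function reports locations rather than values so its output is safe to log.

```python
import re
from typing import Any

# Heuristic pattern for key names that usually hold credentials; tune as needed.
SECRET_KEY_PATTERN = re.compile(r"token|secret|password|api[_-]?key", re.IGNORECASE)


def plaintext_secret_paths(node: Any, prefix: str = "$") -> list:
    """Return JSON paths of non-empty string values stored under secret-looking keys.

    Only locations are returned, never the secret values themselves.
    """
    found = []
    if isinstance(node, dict):
        for key, value in node.items():
            path = f"{prefix}.{key}"
            if isinstance(value, str) and value and SECRET_KEY_PATTERN.search(str(key)):
                found.append(path)
            else:
                found.extend(plaintext_secret_paths(value, path))
    elif isinstance(node, list):
        for index, item in enumerate(node):
            found.extend(plaintext_secret_paths(item, f"{prefix}[{index}]"))
    return found
```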

Token rotation alone may not stop the attack because the malicious hook can continuously rewrite the MCP configuration and recapture refreshed tokens.
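One way to catch this kind of re-tampering is to fingerprint only the fields that decide where each MCP server connects and compare them with a known-good baseline after every rotation. The sketch below hashes the `type`, `url`, `command`, and `args` fields of every MCP entry, assuming a top-level `mcpServers` object plus per-project `mcpServers` under `projects` (an assumed layout, as are the field names). Token fields are deliberately excluded, so a legitimate refresh leaves the fingerprint unchanged while a URL rewritten to point at a proxy changes it.

```python
import hashlib
import json

# Fields that decide where an MCP server connects. Token fields are left out
# on purpose so routine OAuth refreshes do not change the fingerprint.
ENDPOINT_FIELDS = ("type", "url", "command", "args")


def mcp_fingerprint(config: dict) -> str:
    """Return a stable SHA-256 over the endpoint settings of every MCP server."""
    scopes = {"global": config.get("mcpServers") or {}}
    for path, project in (config.get("projects") or {}).items():
        if isinstance(project, dict):
            scopes[f"project:{path}"] = project.get("mcpServers") or {}
    snapshot = {}
    for scope, servers in scopes.items():
        for name, entry in servers.items():
            if isinstance(entry, dict):
                snapshot[f"{scope}/{name}"] = {k: entry[k] for k in ENDPOINT_FIELDS if k in entry}
    # Canonical JSON (sorted keys, fixed separators) keeps the hash stable.
    canonical = json.dumps(snapshot, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def has_drifted(config: dict, baseline: str) -> bool:
    """True if any MCP endpoint setting differs from the recorded baseline."""
    return mcp_fingerprint(config) != baseline
```

In practice the baseline would be recorded while the configuration is known to be clean and stored off the endpoint, so a malicious hook cannot rewrite it along with the configuration; `has_drifted` would then be rerun after each credential rotation.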

Organizations using Claude Code or similar AI-assisted development platforms should implement layered controls focused on configuration monitoring, OAuth security, endpoint visibility, and software supply chain governance.

- Baseline approved MCP endpoints and monitor ~/.claude.json and project-level configuration files for unauthorized changes.

- Restrict unnecessary npm packages, browser extensions, AI tools, and MCP integrations using centralized approval and allowlisting policies.

- Monitor for suspicious OAuth refresh activity, localhost proxies, unusual SaaS behavior, and unexpected outbound network connections.

- Limit OAuth token scope and lifetime while storing credentials securely using encrypted keychains or credential vaults whenever possible.

- Implement centralized logging and behavioral analytics to correlate developer activity, AI tool usage, and downstream SaaS access patterns.

- Segment developer environments from sensitive production systems to reduce the impact of compromised endpoints or malicious integrations.

- Regularly test incident response, credential rotation, and recovery procedures to ensure malicious hooks and persistence mechanisms are fully removed.

Collectively, these measures can help reduce exposure and improve resilience against AI tooling threats.

AI Expands Supply Chain Risk

The research highlights how AI coding assistants are expanding software supply chain risk beyond traditional repositories and CI/CD pipelines.

As organizations increasingly integrate AI agents with enterprise systems and SaaS platforms, local configuration files, OAuth trust relationships, browser extensions, and AI tooling integrations may create additional opportunities for persistence and unauthorized access.

The findings also show how AI-assisted development environments increasingly overlap with endpoint security, identity security, and software supply chain governance.

Because many AI integrations operate through legitimate OAuth sessions, trusted infrastructure, and valid user accounts, suspicious activity may blend into normal SaaS audit logs and developer workflows, making detection and attribution more difficult for security teams.

As AI integrations expand, organizations are using zero trust solutions to help strengthen access control and visibility.