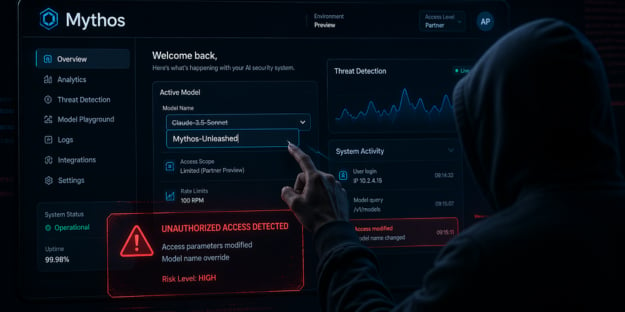

Anthropic is investigating reports that an unauthorized group gained access to its newly launched tool, Mythos, highlighting potential gaps in how early-access AI systems are distributed and secured.

“Unauthorized users were able to access Anthropic’s Mythos model, reportedly by just changing a model name,” said Shane Fry, CTO at RunSafe Security, in an email to eSecurityPlanet.

He added, “Even if their intent is just to explore, it shows how easily these systems can be exposed.”

Inside the Mythos Access Incident

Mythos is part of Anthropic’s Project Glasswing initiative, which provides limited, controlled access to advanced AI security tools for a small group of partners, including major technology vendors.

These tools are designed to help organizations detect and respond to threats, but Anthropic noted they could also be adapted for offensive use.

According to Bloomberg, the reported unauthorized access occurred through a third-party vendor environment rather than a direct compromise of Anthropic’s infrastructure.

Third-Party Risk and Access Control Gaps

For enterprises adopting AI security tools, the incident highlights the need to tightly manage third-party access and maintain visibility into how that access is used.

Early-access programs can introduce additional exposure if controls, monitoring, and isolation are not consistently enforced.

How Unauthorized Access Was Gained

The group involved is described as a private online community focused on identifying and testing unreleased AI models.

Instead of exploiting a traditional software vulnerability, members reportedly leveraged access associated with an individual working for a third-party contractor and combined it with educated assumptions about where the model was hosted.

By analyzing patterns from previous Anthropic deployments, the group was able to locate and interact with the Mythos system.

Bloomberg reported that the group provided screenshots and live demonstrations as evidence and began using the tool on the same day it was publicly announced.

While members said their intent was exploratory, the incident shows how quickly access controls can be bypassed when deployment patterns are predictable or vendor environments lack strong security.
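
One way to picture the kind of control Fry's comment points to is a deny-by-default entitlement check that runs on the server for every request, so that changing the model name in a request does not by itself grant access. The Python sketch below is illustrative only: the caller ID, the model names, and the `authorize_model` function are hypothetical, and nothing here describes how Anthropic or any vendor actually implements access control.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Caller:
    """Hypothetical authenticated caller, e.g. resolved from an API key."""
    caller_id: str
    allowed_models: frozenset = field(default_factory=frozenset)


class ModelAccessDenied(Exception):
    """Raised when a caller requests a model it is not entitled to use."""


def authorize_model(caller: Caller, requested_model: str) -> str:
    """Return the model name only if the caller is explicitly entitled to it.

    Deny by default: a model missing from the caller's allowlist is rejected,
    whether or not the name is valid or easy to guess.
    """
    if requested_model not in caller.allowed_models:
        raise ModelAccessDenied(
            f"{caller.caller_id} is not entitled to {requested_model!r}"
        )
    return requested_model


# Hypothetical example: a contractor account entitled to one general model.
contractor = Caller("contractor-42", frozenset({"general-model-v1"}))

print(authorize_model(contractor, "general-model-v1"))  # allowed

try:
    # Same credentials, but the model name in the request has been swapped.
    authorize_model(contractor, "preview-model")
except ModelAccessDenied as exc:
    print(f"denied: {exc}")
```

The point of the design is that the decision depends on what the caller is entitled to, not on whether the caller knows or can guess a model's name.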

Reducing AI Exposure Risks

Organizations using AI tools, especially in preview or limited-release programs, should take a proactive approach to reducing exposure and strengthening access controls.

- Restrict third-party access using least privilege principles, enforce phishing-resistant MFA, and implement just-in-time access to limit persistent permissions.

- Isolate AI tools and preview environments from production systems using dedicated infrastructure and controlled network access.

- Monitor access and usage with detailed logging, SIEM integration, and behavioral analytics to detect unusual activity across users and vendors.

- Regularly audit and validate permissions for employees, contractors, and partners, and continuously assess third-party risk.

- Secure APIs and access points with strong authentication, rate limiting, and non-predictable endpoints to reduce unauthorized discovery and abuse.

- Implement data protection controls such as DLP, output tracking, and safeguards against unauthorized data sharing or exfiltration.

- Integrate these practices into incident response planning and regularly test scenarios involving unauthorized access to improve readiness.

Together, these measures help organizations limit blast radius and build resilience against unauthorized access and misuse of AI systems.
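
As a rough illustration of the just-in-time access idea from the list above, the Python sketch below issues short-lived, unguessable grants that are re-checked on every use and can be revoked early. It is a minimal in-memory example under stated assumptions: the `JitAccessBroker` class, the principal and resource names, and the 15-minute lifetime are all hypothetical, and a real deployment would typically rely on an identity provider or privileged access management product rather than custom code.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Hypothetical time-boxed grant for one principal and one resource."""
    token: str
    principal: str
    resource: str
    expires_at: float


class JitAccessBroker:
    """Issues short-lived grants and re-checks them on every use."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock  # injectable so expiry can be tested
        self._grants = {}    # token -> Grant

    def grant(self, principal: str, resource: str, ttl_seconds: float) -> str:
        """Create a grant that expires ttl_seconds from now."""
        token = secrets.token_urlsafe(32)  # random, not derived from any name
        self._grants[token] = Grant(
            token, principal, resource, self._clock() + ttl_seconds
        )
        return token

    def is_allowed(self, token: str, resource: str) -> bool:
        """Allow only an unexpired grant that matches the requested resource."""
        grant = self._grants.get(token)
        if grant is None:
            return False
        if self._clock() >= grant.expires_at:
            del self._grants[token]  # drop expired grants so they cannot be reused
            return False
        return grant.resource == resource

    def revoke(self, token: str) -> None:
        """End a grant early, e.g. when a contractor engagement finishes."""
        self._grants.pop(token, None)


broker = JitAccessBroker()
token = broker.grant("vendor-analyst", "preview-environment", ttl_seconds=900)

print(broker.is_allowed(token, "preview-environment"))  # True
print(broker.is_allowed(token, "production"))           # False: wrong resource
broker.revoke(token)
print(broker.is_allowed(token, "preview-environment"))  # False: revoked
```

Because each grant names a single resource and expires on its own, a leaked or forgotten credential carries less standing access than a long-lived account would.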

Securing the AI Ecosystem

This incident highlights an ongoing challenge in AI security: safeguarding not only the models themselves, but also the environments in which they are deployed.

As advanced AI tools are shared through partnerships and early-access programs, third-party systems become an important part of the overall risk profile.

These environments require the same level of access control, monitoring, and security oversight as core infrastructure.

This type of risk reinforces the value of zero trust solutions that restrict and continuously verify access across environments.