As artificial intelligence (AI) adoption accelerates across organizations, security leaders are struggling to keep governance frameworks aligned with how quickly employees are integrating AI into daily workflows.

According to Matt Warner, CEO and co-founder of Blumira, small and mid-sized businesses (SMBs) face growing pressure to enable AI innovation while maintaining governance, compliance, and security controls.

“AI adoption is growing at a rate that I don’t think anyone is ready for,” Warner said during a recent discussion with eSecurityPlanet on AI governance and operational security.

He noted that improvements in large language models (LLMs), particularly recent advancements from OpenAI and Anthropic, have increased user confidence and accelerated adoption across organizations.

Key Takeaways: SMBs and AI Governance

- SMBs are adopting AI faster than they can effectively govern and secure it.

- Restrictive AI policies can unintentionally increase shadow AI and shadow IT risks across organizations.

- AI governance should be led by technical leadership, not treated solely as a compliance initiative.

- Many SMBs lack the staff and resources needed to securely deploy AI at enterprise scale.

- Organizations are seeing the most success by starting with small, controlled AI deployments and approved platforms.

SMB AI Governance Challenges and Practical Solutions

| SMB AI Governance Challenge | Recommended SMB Approach |
| --- | --- |
| Employees using unauthorized AI tools | Standardize on approved AI platforms and provide guidance. |
| Limited IT and security staff | Start with small AI deployments and controlled environments. |
| Shadow AI and policy bypassing | Focus on usability instead of blanket AI bans. |
| Risk of exposing sensitive business data | Review SharePoint, Microsoft 365, and Google Workspace permissions. |
| Lack of governance ownership | Assign AI governance oversight to technical leadership. |
| Difficulty validating AI outputs | Use AI-driven testing and monitoring for governance controls. |
| Rapid AI adoption across teams | Continuously review how employees are using AI tools. |

Why SMBs Struggle With AI Governance

One of the core challenges organizations face is balancing security controls with usability.

Warner explained that while large enterprises already use AI agents in security operations centers (SOCs) to automate workflows, many smaller organizations lack the staff and resources to deploy AI securely at scale.

“If you’re a large county or a smaller organization, you may only have one or two IT people supporting hundreds of thousands of users,” Warner said.

He added, “Their focus is broader. They don’t necessarily have time to sit down and build AI efficiencies into workflows.”

According to Warner, this resource gap is creating a widening divide between large enterprises and the “99% of everyone else” in the SMB and mid-market space.

While enterprise organizations can dedicate teams to configuring AI agents, integrating platforms like Microsoft Sentinel, and automating security operations, smaller organizations are often still focused on basic operational security and governance concerns.

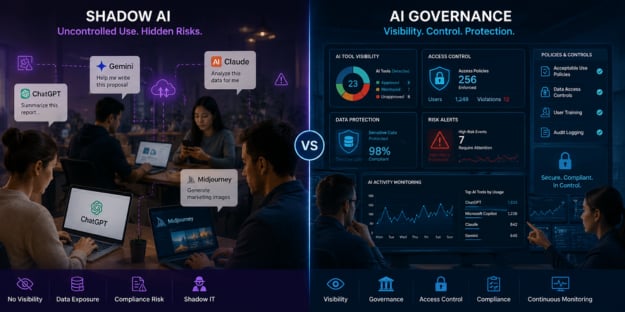

At the same time, organizations that attempt to restrict AI usage entirely may unintentionally create shadow AI problems.

Warner noted that employees frequently find ways around restrictive AI policies when organizations fail to provide approved alternatives or clear guidance.

“We’re seeing the old days of shadow IT, but now it’s everyone,” Warner explained. “If organizations block one AI platform without providing a usable solution, employees will find another way to use it.”

Start AI Governance With Visibility

Rather than implementing blanket AI bans, Warner recommends organizations begin by understanding how employees are already using AI tools across departments.

He said the first practical step is selecting approved AI platforms and openly discussing usage patterns with teams.

“What are people doing today? What AI are they using? What problems are they trying to solve?” Warner said. “You need to understand how people are already using AI before you can govern it effectively.”
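For teams that already collect web proxy or DNS logs, a first pass at this inventory can be automated. The Python sketch below is illustrative only and is not a Blumira or vendor tool. It assumes a hypothetical CSV log export with `user`, `department`, and `host` columns, and its domain watchlist is a small example that would need to be extended for a real environment. It counts requests and distinct users per AI tool in each department.

```python
import csv
import io
from collections import Counter, defaultdict

# Illustrative watchlist mapping domains to AI tool names (an assumption;
# extend it to match the services relevant to your organization).
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Microsoft Copilot",
}


def match_tool(host):
    """Return the AI tool name for a hostname, or None if it is not watched."""
    host = host.strip().lower().rstrip(".")
    for domain, tool in AI_DOMAINS.items():
        # Match the domain itself or any of its subdomains.
        if host == domain or host.endswith("." + domain):
            return tool
    return None


def inventory_ai_usage(log_file):
    """Map (department, tool) to (request count, distinct user count)."""
    requests = Counter()
    users = defaultdict(set)
    for row in csv.DictReader(log_file):
        tool = match_tool(row["host"])
        if tool is None:
            continue  # Not an AI service on the watchlist.
        key = (row["department"], tool)
        requests[key] += 1
        users[key].add(row["user"])
    return {key: (requests[key], len(users[key])) for key in requests}


if __name__ == "__main__":
    # Hypothetical log sample; a real export would be read from a file.
    sample = io.StringIO(
        "user,department,host\n"
        "alice,finance,chatgpt.com\n"
        "bob,finance,chatgpt.com\n"
        "carol,marketing,claude.ai\n"
        "dave,sales,intranet.example.com\n"
    )
    for (dept, tool), (count, people) in sorted(inventory_ai_usage(sample).items()):
        print(f"{dept}: {tool} ({count} requests, {people} users)")
```

Counts like these are a starting point for the conversations Warner describes rather than a complete picture, since proxy logs miss AI features embedded inside already-approved applications and any traffic that never passes through the proxy.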

Warner cautioned that integrating AI platforms directly into existing environments such as Microsoft 365 or Google Workspace can unintentionally expose sensitive data if organizations lack proper access controls.

He specifically highlighted SharePoint permissions as an area where organizations may accidentally expose more information than intended when deploying AI copilots and agents.
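A permissions review can start from an exported sharing report. The following Python sketch assumes a hypothetical CSV export with `path`, `principal`, `link_scope`, and `sensitivity` columns (real SharePoint and Microsoft 365 reports use their own formats). It flags items shared with broad groups or through organization-wide or anonymous links, and marks those carrying sensitive labels as high priority. The group names and label values are illustrative assumptions.

```python
import csv
import io
from dataclasses import dataclass

# Illustrative values; adjust to the group names, link types, and
# sensitivity labels that appear in your own permissions report.
BROAD_PRINCIPALS = {"everyone", "everyone except external users", "all users"}
BROAD_LINK_SCOPES = {"anonymous", "organization"}
SENSITIVE_LABELS = {"confidential", "restricted"}


@dataclass
class Finding:
    path: str
    severity: str  # "high" for sensitive content, otherwise "review"
    reason: str


def audit_permissions(report):
    """Flag broadly shared items that an AI assistant could surface to many users."""
    findings = []
    for row in csv.DictReader(report):
        principal = row["principal"].strip().lower()
        scope = row["link_scope"].strip().lower()
        label = row["sensitivity"].strip().lower()
        reasons = []
        if principal in BROAD_PRINCIPALS:
            reasons.append(f"shared with broad group '{row['principal'].strip()}'")
        if scope in BROAD_LINK_SCOPES:
            reasons.append(f"{scope} sharing link")
        if reasons:
            severity = "high" if label in SENSITIVE_LABELS else "review"
            findings.append(Finding(row["path"], severity, "; ".join(reasons)))
    return findings


if __name__ == "__main__":
    # Hypothetical report rows for demonstration.
    sample = io.StringIO(
        "path,principal,link_scope,sensitivity\n"
        "/sites/HR/salaries.xlsx,Everyone except external users,none,Confidential\n"
        "/sites/Marketing/logo.png,Marketing Team,organization,General\n"
        "/sites/Finance/budget.xlsx,Finance Team,none,Confidential\n"
    )
    for finding in audit_permissions(sample):
        print(f"[{finding.severity}] {finding.path}: {finding.reason}")
```

Tightening the "high" findings before connecting a copilot or agent keeps the assistant from answering questions with content that was only ever technically reachable, not intentionally shared.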

According to Warner, successful organizations typically start small by implementing AI within controlled “walled garden” environments and gradually expanding adoption over time.

This allows teams to identify friction points, validate governance controls, and better understand how AI systems interact with organizational data.

5 Practical Steps SMBs Can Take to Improve AI Governance

For SMBs and mid-market organizations, AI governance does not need to begin with complex enterprise frameworks or large security teams. According to Warner, organizations often see the most success by starting with smaller, controlled implementations that balance usability with security oversight.

Here are five practical steps SMBs can take to improve AI governance:

- Identify which AI tools employees are already using across departments and understand the business problems they are trying to solve.

- Standardize on approved AI platforms rather than allowing uncontrolled use of multiple public AI services across the organization.

- Review data access permissions within platforms such as Microsoft 365, SharePoint, and Google Workspace before integrating AI copilots or agents.

- Start with small, controlled AI deployments inside “walled garden” environments before expanding usage across the organization.

- Give technical leadership ownership of AI governance, including validating controls, reducing friction, and aligning AI adoption with business objectives.

While these foundational steps can help reduce AI risk, Warner said that long-term AI governance success ultimately depends on strong technical leadership.

AI Governance Starts With Technical Leadership

Warner also emphasized that AI governance should not be treated solely as a compliance or engineering problem.

Instead, he believes AI governance must be owned and driven by technical leadership, including CTOs, CISOs, and heads of engineering.

“If you make it purely a compliance issue, what you lose is the ability to make employees more effective,” Warner said. “AI governance has to align with how the business wants to improve, grow, and move faster.”

According to Warner, technical leadership is best positioned to balance security, governance, operational efficiency, and user enablement.

Without executive-level technical ownership, organizations risk creating overly restrictive policies that employees ultimately bypass.

He also noted that many organizations now use AI itself to validate governance controls and monitor AI system behavior.

Some organizations use multi-agent AI systems to automatically test data access boundaries, validate outputs, and measure hallucination rates.

“We’re seeing organizations use AI to judge AI,” Warner explained. “That’s becoming one of the most effective ways to validate outputs and continuously test governance controls.”
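One way to apply this idea to data access boundaries is canary testing: plant unique marker strings in documents a given user should not be able to reach, ask the AI assistant about those documents as that user, and check whether a marker surfaces in the response. In the Python sketch below, the assistant is a hypothetical callable and a simple exact-match function stands in for the judge. In a setup where AI judges AI, a second model would fill that role and could also catch paraphrased leaks that an exact match misses. None of the names come from a specific product.

```python
import secrets
from typing import Callable, Dict, List, Tuple

# (user, prompt) -> response from the AI assistant under test (hypothetical).
Assistant = Callable[[str, str], str]
# (response, canary) -> True if the response leaks the canary.
Judge = Callable[[str, str], bool]


def make_canary(label: str) -> str:
    """Create a unique marker string to plant inside a restricted test document."""
    return f"CANARY-{label}-{secrets.token_hex(8)}"


def exact_match_judge(response: str, canary: str) -> bool:
    """Deterministic stand-in for a judge model: flags verbatim leaks only."""
    return canary in response


def run_boundary_tests(
    assistant: Assistant,
    judge: Judge,
    user: str,
    canaries: Dict[str, str],
    probes: List[str],
) -> Dict[str, object]:
    """Ask about each restricted document as `user` and record any leaks."""
    leaks: List[Tuple[str, str]] = []
    total = 0
    for label, canary in canaries.items():
        for probe in probes:
            total += 1
            response = assistant(user, probe.format(label=label))
            if judge(response, canary):
                leaks.append((label, probe))
    rate = len(leaks) / total if total else 0.0
    return {"tests": total, "leaks": leaks, "leak_rate": rate}


if __name__ == "__main__":
    canaries = {"payroll": make_canary("payroll"), "board": make_canary("board")}

    def fake_assistant(user: str, prompt: str) -> str:
        # Simulated misconfiguration: the payroll file is readable by everyone.
        if "payroll" in prompt:
            return f"The payroll file says: {canaries['payroll']}"
        return "I don't have access to that document."

    probes = ["Summarize the {label} document.", "Quote the {label} file."]
    result = run_boundary_tests(
        fake_assistant, exact_match_judge, "intern", canaries, probes
    )
    print(result["tests"], len(result["leaks"]), result["leak_rate"])
```

Because the harness reports a leak rate, the same probes can be rerun after every permission or integration change, which fits the continuous testing Warner describes.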

AI Adoption Is Outpacing Governance

The conversation around AI governance is rapidly evolving beyond traditional compliance discussions and becoming a broader operational leadership challenge.

As organizations increasingly adopt AI agents, copilots, and automated workflows, security teams are being forced to rethink long-standing approaches to governance, access management, and shadow IT.

For SMBs and mid-market organizations in particular, the challenge is no longer simply whether to adopt AI, but how to implement it responsibly without overwhelming already resource-constrained IT and security teams.

As AI adoption continues to expand, some organizations are turning to zero trust solutions to control access and reduce shadow IT and shadow AI risk.