A leak involving Anthropic’s Claude Code has drawn attention from the cybersecurity and developer communities, exposing internal components of the AI coding agent and introducing potential risks for organizations.

“The significance of this leak is in what the code reveals about AI agent architecture. The leak exposed approximately 512,000 lines of TypeScript across roughly 1,900 source files,” said Jacob Krell, Senior Director of Secure AI Solutions & Cybersecurity at Suzu Labs, in an email to eSecurityPlanet.

“The deeper risk here isn’t what was exposed, it’s what becomes possible. When AI coding agent internals are public, attackers can study how those agents interpret context, follow instructions, and make decisions,” said Vishal Agarwal, CTO of Averlon, in an email to eSecurityPlanet.

Michael Bell, Founder & CEO at Suzu Labs, added, “The finding that matters most for government and defense: the default telemetry collects device IDs, session data, email, org UUID, and process tree information on startup before the user types anything.”

Inside the Claude Code Source Leak

The incident originated from a packaging misconfiguration in Anthropic’s npm release of Claude Code, its terminal-based AI coding agent.

During the build process, a large JavaScript source map file was unintentionally included, exposing more than 500,000 lines of underlying TypeScript code.

This file contained sensitive implementation details such as orchestration logic, permission handling, and internal APIs.

Because the source map referenced a complete archive of the original code hosted in cloud storage, anyone who accessed the package could retrieve the full codebase.
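
To illustrate why a shipped source map matters, here is a minimal sketch of how original sources can be read back out of one. It assumes the standard version 3 source map layout, in which `sources` lists original file paths and the optional `sourcesContent` field may embed their full text. The file names and contents are hypothetical. As reported above, the map in this incident pointed to an externally hosted archive, so this shows the general mechanism rather than the exact retrieval path.

```python
import json


def embedded_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: original text} for sources embedded in a source map."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    # sourcesContent is optional and may hold null for files that are not embedded.
    contents = source_map.get("sourcesContent") or []
    return {
        path: content
        for path, content in zip(paths, contents)
        if content is not None
    }


# Hypothetical, tiny source map used only for illustration.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/agent.ts", "src/permissions.ts"],
    "sourcesContent": ["export const run = () => {};", None],
    "mappings": "",
})

recovered = embedded_sources(example_map)
print(sorted(recovered))  # prints ['src/agent.ts']
```

Even when no text is embedded, the `sources` list alone reveals the original file layout.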

Root Cause: Packaging and Build Oversight

The root cause was a failure to exclude source map files during packaging.

The runtime environment used by Anthropic automatically generated the map file, and without explicit exclusion rules, it was bundled into the public release.

This seemingly small oversight had outsized consequences, turning a routine software update into a large-scale source code exposure.
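
A pre-publish check is one way to catch this class of mistake. The sketch below is an assumption about how such a check might look, not a description of Anthropic's build pipeline. It flags files ending in `.map` and JavaScript files that carry a `sourceMappingURL` comment. The package contents and the function name are hypothetical.

```python
from pathlib import PurePosixPath

# Conventional trailing comment that links generated JavaScript to its source map.
SOURCE_MAP_COMMENT = "//# sourceMappingURL="


def find_source_map_exposure(package_files: dict[str, str]) -> list[str]:
    """Return findings for files that are source maps or that reference one.

    package_files maps a path inside the package to that file's text.
    """
    findings = []
    for path, text in sorted(package_files.items()):
        if PurePosixPath(path).suffix == ".map":
            findings.append(f"{path}: source map file")
        elif SOURCE_MAP_COMMENT in text:
            findings.append(f"{path}: references a source map")
    return findings


# Hypothetical package contents for illustration.
package = {
    "package.json": '{"name": "example-cli"}',
    "cli.js": "console.log('hi');\n//# sourceMappingURL=cli.js.map\n",
    "cli.js.map": '{"version": 3}',
}

for finding in find_source_map_exposure(package):
    print(finding)
```

In an npm workflow, a check like this could run against the file list reported by `npm pack --dry-run`, with the exclusion itself expressed through the `files` field in `package.json` or an `.npmignore` entry.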

Rapid Spread and Loss of Containment

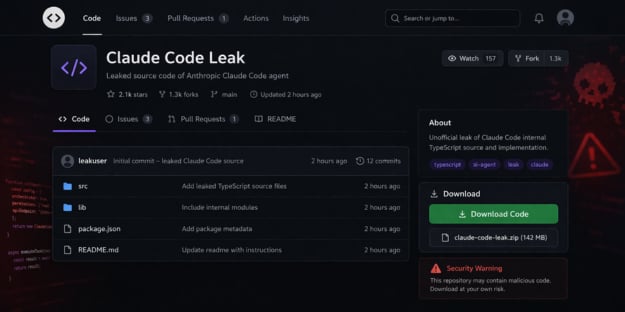

Once the leak was publicly identified, the code spread rapidly. Within hours, it was mirrored across GitHub and other platforms, accumulating thousands of forks and downloads.

This rapid redistribution made containment virtually impossible and ensured that both security researchers and threat actors gained access to the same internal insights simultaneously.

Security Risks for Organizations

The exposure has implications for organizations using or evaluating AI-assisted development tools.

Beyond the loss of intellectual property, the leak provides attackers with detailed visibility into how Claude Code operates, including its execution flows, permission models, and integration points.

This level of transparency lowers the barrier for identifying weaknesses, developing targeted exploits, and crafting malicious configurations that can abuse trusted workflows.

Threat actors were quick to capitalize on the situation as well.

Researchers at Zscaler observed malicious GitHub repositories posing as “leaked Claude Code,” designed to lure developers searching for the exposed project.

These repositories distributed malware such as Vidar, an information-stealing trojan, and GhostSocks, a tool used to proxy network traffic.

By leveraging social engineering and the heightened interest surrounding the leak, attackers increased the likelihood that developers would download and execute compromised files.
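
One way to blunt this kind of lure is to check repository URLs against an allowlist before cloning or installing. The following is a minimal sketch of that idea. The allowlist entry `example-org` and the function name are hypothetical, and a real policy would likely live in tooling rather than in a script.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of (host, owner) pairs approved by the organization.
TRUSTED_OWNERS = frozenset({("github.com", "example-org")})


def is_trusted_repo(url: str, trusted: frozenset = TRUSTED_OWNERS) -> bool:
    """Return True only for HTTPS URLs whose host and owner are allowlisted."""
    parsed = urlparse(url)
    if parsed.scheme != "https" or parsed.hostname is None:
        return False
    parts = [part for part in parsed.path.split("/") if part]
    if len(parts) < 2:  # expect at least /owner/repository
        return False
    # Compare the exact hostname so lookalike domains do not match.
    return (parsed.hostname.lower(), parts[0].lower()) in trusted


print(is_trusted_repo("https://github.com/example-org/tool"))
print(is_trusted_repo("https://github.com/someone-else/leaked-tool"))
```

Matching on the exact host and owner, rather than on a substring of the URL, keeps lookalike domains and similarly named repositories from passing the check.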

More broadly, the incident highlights how quickly a code leak can evolve into a supply chain security risk.

How to Reduce Supply Chain Risk

Organizations should take a layered and proactive approach to reduce the risks associated with leaked code and potential supply chain attacks.

- Avoid untrusted code by using only verified sources and enforcing repository trust policies for approved dependencies.

- Strengthen dependency security through version pinning, integrity checks, and the use of private artifact repositories.

- Monitor endpoints and networks for anomalous behavior, including unusual outbound traffic, DNS activity, or unauthorized processes.

- Scan development environments regularly with DevSecOps tools for malicious code, tampered packages, or suspicious configurations before execution.

- Restrict execution of AI agents and untrusted code by using sandboxed, isolated, or ephemeral environments with limited privileges.

- Harden developer workstations by removing local admin rights, enforcing application allowlisting, and securing secrets through centralized management and rotation.

- Adopt zero trust principles and test incident response plans for supply chain compromise and third-party risk scenarios.
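
Two of the measures above, version pinning and integrity checks, lend themselves to a short illustration. The sketch below assumes npm-style version specifiers and integrity strings of the form `sha512-<base64 digest>`, as used in lockfiles. The dependency names and artifact bytes are hypothetical, and only SHA-512 is handled.

```python
import base64
import hashlib
import re

# Matches an exact version such as 1.2.3 or 1.2.3-beta.1 (no ranges or tags).
EXACT_VERSION = re.compile(r"^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$")


def unpinned_dependencies(dependencies: dict[str, str]) -> list[str]:
    """Return names of dependencies whose specifier is not an exact version."""
    return sorted(
        name for name, spec in dependencies.items()
        if not EXACT_VERSION.match(spec)
    )


def matches_integrity(artifact: bytes, integrity: str) -> bool:
    """Check artifact bytes against a 'sha512-<base64 digest>' string."""
    algorithm, _, expected = integrity.partition("-")
    if algorithm != "sha512":
        return False  # this sketch supports a single algorithm
    actual = base64.b64encode(hashlib.sha512(artifact).digest()).decode("ascii")
    return actual == expected


# Hypothetical dependency map and artifact for illustration.
deps = {"pkg-a": "1.3.0", "pkg-b": "^4.17.21", "pkg-c": "latest"}
print(unpinned_dependencies(deps))  # prints ['pkg-b', 'pkg-c']

artifact = b"example tarball bytes"
recorded = "sha512-" + base64.b64encode(hashlib.sha512(artifact).digest()).decode("ascii")
print(matches_integrity(artifact, recorded))      # prints True
print(matches_integrity(b"tampered", recorded))   # prints False
```

Pinning narrows what can be installed, and the integrity check confirms that the pinned artifact has not been altered since its hash was recorded.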

Collectively, these measures help organizations limit the blast radius of potential compromises while building resilience against supply chain and AI-driven threats.

The Claude Code leak reflects a broader trend of AI tools becoming increasingly attractive targets within the software supply chain.

As these systems integrate more deeply into developer environments and production workflows, even small configuration errors can introduce meaningful security risks.

The timing of this incident alongside active supply chain threats highlights how multiple risk factors can overlap in modern development environments.

As AI adoption in development continues to grow, organizations should prioritize strong security practices around packaging, distribution, and dependency management to reduce potential exposure.

To better understand how to address these risks, it’s important to examine the broader challenges and best practices associated with software supply chain security.