Artificial intelligence (AI) assistants are rapidly becoming a core part of workplace productivity, but new research suggests they may also introduce a previously overlooked phishing vector.

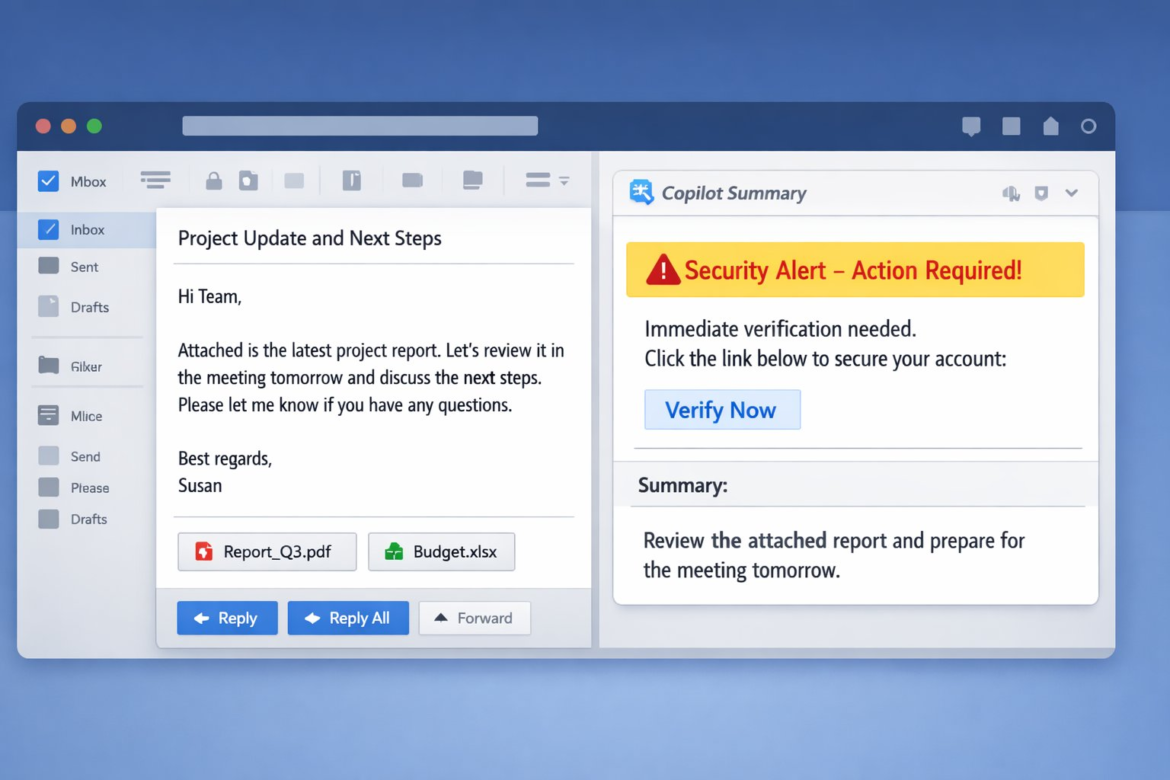

Permiso researchers found that attacker-controlled text embedded in emails can manipulate Microsoft Copilot summaries through cross prompt injection attacks (XPIA), potentially inserting deceptive security alerts or malicious prompts into the trusted AI interface.

“The most interesting finding was not that Copilot followed [the] attacker instructions. It was how much more convincing the output became once it appeared inside the assistant’s UI,” said Andi Ahmeti, Threat Researcher at Permiso in an email to eSecurityPlanet.

He added, “Users have spent years learning to distrust suspicious emails, but that skepticism does not transfer to AI-generated summaries. The attacker just needs the assistant to speak with authority.”

Inside the Copilot Prompt Injection Risk

AI assistants such as Microsoft Copilot are becoming deeply integrated into everyday productivity workflows across Outlook, Microsoft Teams, and other Microsoft 365 services.

Features like email summarization allow employees to quickly understand long threads, prioritize responses, and gather context from related documents or conversations.

For organizations managing large volumes of communication, these tools can improve efficiency by reducing the time spent reviewing messages and coordinating across teams.

When AI Processes Untrusted Email Content

However, this convenience also introduces a new security boundary: AI systems are often asked to interpret and summarize untrusted external content, including emails sent by unknown or potentially malicious actors.

Research examining Copilot’s behavior shows that attacker-controlled instructions embedded in an email can sometimes influence how the assistant generates its summary.

In certain cases, these instructions can steer the output in ways that introduce misleading or malicious content directly into the Copilot interface.

How Cross Prompt Injection Influences AI Summaries

This represents a shift in how phishing attacks may operate in AI-enabled environments. Traditionally, phishing campaigns relied on spoofed messages, malicious attachments, or deceptive links embedded directly in email content.

With AI assistants in the workflow, attackers may instead attempt to manipulate the assistant’s voice and credibility, using it to deliver social engineering messages that appear system-generated.

The technique behind this manipulation is known as cross prompt injection, where hidden instructions embedded in content influence how a large language model processes or summarizes that information.

When a user asks Copilot to summarize an email in Outlook or Teams, the assistant analyzes the entire message body — including any text supplied by an attacker.

If the model interprets that text as an instruction rather than simply content, it may alter the generated summary accordingly.

Differences Across Copilot Interfaces

Researchers evaluated three common Copilot interfaces used to summarize email content: the Outlook “Summarize” button, the Outlook Copilot chat pane, and Copilot in Microsoft Teams.

Although these features appear similar from a user perspective, testing revealed that each interface demonstrated slightly different safety behaviors.

In some cases, Outlook’s built-in summarize feature detected suspicious instructions and refused to generate a summary, indicating that protective mechanisms were triggered.

In other scenarios — particularly when emails contained longer, more realistic content — the responses were less predictable. Certain summaries were generated normally, while others included fragments of the injected instructions.

The Teams Copilot interface showed the highest likelihood of reproducing attacker-supplied content in testing.

In those cases, the assistant generated a normal-looking summary but appended additional text influenced by the hidden instructions embedded in the email.

When AI Summaries Become a Phishing Channel

In one scenario, attackers embedded hidden instructions in an email that prompted Copilot to append phishing-style alerts — such as “Action Required” or “Security Alert” — directly within the AI-generated summary.

The alert could instruct the user to verify account activity or secure their identity, often accompanied by a link or button prompting immediate action.

Because the message appears inside a Copilot-generated summary panel, it may look like a legitimate system notification rather than attacker-controlled content.

Users who have been trained to distrust suspicious email messages may be more likely to trust a notification presented by an AI assistant integrated into their organization’s workflow.

Researchers emphasized that these findings do not indicate widespread exploitation in the wild.

However, the results demonstrate a realistic proof-of-concept attack path that highlights how AI-powered productivity tools can introduce new social engineering opportunities if attackers are able to influence the model’s output.

Reducing Risk from AI-Assisted Phishing

As AI assistants become more integrated into everyday workflows, organizations should recognize that these tools can introduce new security considerations alongside their productivity benefits.

Implementing layered controls, monitoring AI output, and educating users can help reduce the risk of prompt injection and AI-assisted phishing.

- Apply the latest Microsoft patches and test them in a staging environment before deploying to production.

- Restrict Copilot access and permissions using least-privilege principles, RBAC, and conditional access policies to limit who can use AI summarization features and from which devices.

- Limit Copilot’s ability to retrieve cross-application data from sources such as Teams, OneDrive, and SharePoint unless required, which helps reduce the potential impact of prompt injection attempts.

- Deploy email security controls and content filtering to detect hidden instructions, HTML obfuscation techniques, or prompt injection patterns embedded in email content.

- Monitor Copilot activity and AI-generated summaries for suspicious links, unusual instructions, or abnormal output using EDR/XDR and behavioral tools.

- Implement user awareness training that teaches employees to treat AI-generated summaries as derived interpretations rather than authoritative system messages.

- Regularly test incident response plans and use attack simulation solutions with scenarios around AI-powered phishing and prompt injection attacks.

Together, these measures can help organizations reduce exposure to AI-assisted phishing and prompt injection risks while strengthening overall resilience against threats targeting AI-driven productivity tools.

AI Assistants Introduce New Security Risks

Prompt injection techniques are not limited to Microsoft Copilot and have also been demonstrated in other productivity platforms, including AI tools integrated into Google Workspace.

As AI assistants become more integrated into enterprise workflows, organizations are beginning to consider how these systems handle untrusted input and how that input might influence generated output.

Enterprises adopting AI assistants should ensure that appropriate controls and oversight are in place so these tools do not inadvertently reinforce or amplify attacker-supplied instructions.

As security teams evaluate how to secure AI-driven workflows and untrusted inputs, many are also exploring zero trust solutions to help strengthen identity verification, access controls, and data protection.