A newly published security audit by Trail of Bits reveals that WhatsApp’s privacy-preserving AI system initially contained multiple high-risk vulnerabilities that could have exposed user messages.

All identified issues were addressed by Meta prior to the new feature’s release.

The findings come from a comprehensive security assessment conducted in early 2025 and publicly disclosed on April 7, 2026. The review was carried out by six Trail of Bits consultants over several weeks, combining design analysis, infrastructure testing, and code review of WhatsApp’s “Private Processing” system, a confidential computing platform designed to enable AI features like message summarization without breaking end-to-end encryption.

Trail of Bits identified 28 issues in total, including 8 of high severity. These flaws affected core security guarantees of the system, including confidentiality, integrity, and resistance to insider threats. While Meta resolved most of them before launch, the audit highlights how subtle implementation gaps in Trusted Execution Environments (TEEs) can undermine their security promises.

WhatsApp, which serves more than two billion users globally, introduced Private Processing to reconcile a longstanding technical conflict: enabling AI features that require access to message content while preserving its strict end-to-end encryption model. To achieve this, Meta built an architecture based on AMD SEV-SNP-powered confidential virtual machines (CVMs) and NVIDIA confidential computing GPUs, in which decrypted data is processed within isolated hardware enclaves inaccessible even to Meta itself.

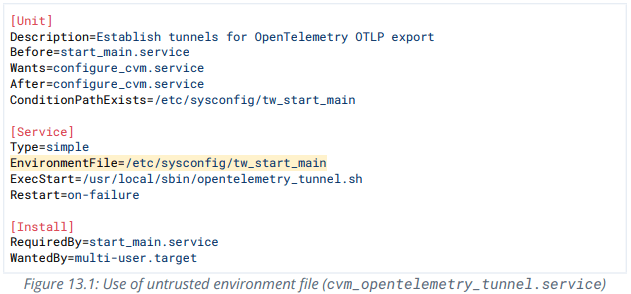

However, the audit demonstrates that this isolation is not foolproof. One of the most serious issues allowed code injection via environment variables that were loaded after the system’s cryptographic measurement was taken. Because those variables were not covered by the measurement, malicious code could run inside a supposedly “trusted” enclave without invalidating its attestation, potentially enabling silent data exfiltration.
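
To make the idea of strict environment-variable validation concrete, here is a minimal Python sketch. It is not Meta's implementation: the variable names, the patterns, and the `validate_env` helper are hypothetical, and the sketch only assumes that an allowlist is enforced before any unmeasured input reaches the workload.

```python
import re

# Hypothetical allowlist: only these variables may be passed into the enclave
# after measurement, and each value must fully match a narrow pattern.
ALLOWED_ENV = {
    "REGION": re.compile(r"[a-z0-9-]{1,32}"),
    "LOG_LEVEL": re.compile(r"debug|info|warn|error"),
}


def validate_env(env: dict) -> dict:
    """Return a copy of env if every entry is allowlisted, else raise ValueError."""
    for name, value in env.items():
        pattern = ALLOWED_ENV.get(name)
        if pattern is None:
            # Unknown variables (for example loader-related ones) are rejected outright.
            raise ValueError(f"unexpected environment variable: {name}")
        if not pattern.fullmatch(value):
            raise ValueError(f"invalid value for environment variable: {name}")
    return dict(env)
```

With this allowlist, `validate_env({"REGION": "eu-west-1"})` passes, while any variable outside the list, such as a loader override, raises an error instead of silently changing what runs inside the enclave.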

Another critical flaw involved ACPI tables (low-level hardware configuration data), which were not covered by the system’s attestation. A compromised hypervisor could inject malicious virtual devices capable of reading sensitive memory, including user messages and encryption keys, while still appearing trustworthy to clients.

The audit also uncovered weaknesses in how the system verified security patch levels. Instead of validating firmware versions cryptographically, the system initially trusted self-reported values, allowing attackers running outdated, vulnerable firmware to masquerade as fully patched systems.
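
The patch-level check can be illustrated with a short Python sketch. It assumes the version numbers have already been extracted from a hardware-signed attestation report whose signature was verified, rather than from anything the host reports about itself. The `TcbVersion` type and `meets_minimum` helper are hypothetical names, and the field layout loosely mirrors the SEV-SNP TCB components.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class TcbVersion:
    """Firmware component versions, as taken from a verified attestation report."""
    bootloader: int
    tee: int
    snp: int
    microcode: int


def meets_minimum(reported: TcbVersion, minimum: TcbVersion) -> bool:
    """Accept only if every component is at or above the required minimum.

    The comparison is component-wise: a newer bootloader must not mask
    an outdated SNP firmware or microcode version.
    """
    return all(r >= m for r, m in zip(astuple(reported), astuple(minimum)))
```

Comparing each component separately, instead of comparing the versions as one ordered tuple, avoids accepting a platform where one component is newer than required but another is still vulnerable.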

Perhaps the most impactful issue affected attestation “freshness.” Without binding attestations to individual sessions, attackers who compromised a system once could replay valid attestation data indefinitely, effectively creating a persistent backdoor. This could allow a rogue server to impersonate a legitimate WhatsApp processing node and silently collect user messages.

Meta addressed these problems with targeted fixes, including strict validation of environment variables, cryptographic verification of firmware versions, custom bootloaders for hardware integrity checks, and the addition of per-session nonces to prevent attestation replay attacks.
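
The per-session nonce fix can be sketched in Python as follows. This is a simplified illustration, not the actual protocol: an HMAC with a made-up `HARDWARE_KEY` stands in for the hardware-rooted signature on a real attestation report, and the `make_report` and `verify_report` helpers are hypothetical.

```python
import hashlib
import hmac
import secrets

# Stand-in for the hardware-rooted signing key (assumption for this sketch).
# Real reports are signed by the platform and checked against a certificate chain.
HARDWARE_KEY = secrets.token_bytes(32)


def make_report(measurement: bytes, nonce: bytes) -> dict:
    """Server side: bind the client's fresh nonce into the signed report."""
    report_data = hashlib.sha256(nonce).digest()
    signature = hmac.new(HARDWARE_KEY, measurement + report_data, hashlib.sha256).digest()
    return {"measurement": measurement, "report_data": report_data, "signature": signature}


def verify_report(report: dict, expected_measurement: bytes, nonce: bytes) -> bool:
    """Client side: check signature, measurement, and that the report is bound to our nonce."""
    body = report["measurement"] + report["report_data"]
    expected_sig = hmac.new(HARDWARE_KEY, body, hashlib.sha256).digest()
    if not hmac.compare_digest(report["signature"], expected_sig):
        return False
    if not hmac.compare_digest(report["measurement"], expected_measurement):
        return False
    # Freshness: a report captured in an earlier session carries a different nonce hash.
    return hmac.compare_digest(report["report_data"], hashlib.sha256(nonce).digest())
```

A client generates a new nonce with `secrets.token_bytes(32)` for every session. A report captured from an earlier session still has a valid signature and measurement, but it fails the final check because it was bound to a different nonce.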

Trail of Bits also highlighted risks stemming from metadata correlation and infrastructure design choices, such as the potential to target users by geographic routing patterns or correlate anonymous tokens with request timing. Other concerns relate to transparency, as fully reproducible builds of the secure environments remain difficult to achieve, limiting independent verification.

These findings echo earlier audit results published in 2025, which also concluded that WhatsApp’s AI system was fundamentally sound but dependent on complex trust assumptions and careful implementation.
